When Mary Wollstonecraft Shelley penned the cult classic Frankenstein, she referred to the story’s ill-fated protagonist as “The Modern Prometheus,” directly referencing the Greek Titan known for creating mankind.

Prometheus loved humans, so much so that he stole the gift of fire from Mount Olympus and passed it on to the lowly humans who were neglected by the gods. His generosity, however, was to undo him and curse him for the rest of his existence.

Frankenstein would also share this fate after taking on the role of creator, a responsibility that ended up being too much to bear.

And now, after centuries of reiterating the same theme, we too are becoming the modern Prometheus. Our ability to create sentient life is edging closer to reality as our understanding of artificial intelligence continues to grow. But will it be our greatest burden or our greatest accomplishment?

The history of artificial intelligence goes back farther than people realize. Throughout history, we seem to have latched onto the idea of creating minds that surpass ours, capable of understanding realities we can’t.

But where does it all come from? Why do we have this fascination? And will we ever accomplish feats that live up to the old myths and stories of Prometheus?

Let’s start from square one.

Finding its roots in ancient thought

The concept of artificial intelligence has existed since ancient times, when Greek poets and writers imagined fantastical beings manufactured by deities. The most popular of these deities was Hephaestus, god of smiths, who created mechanical servants and the “bronze man Talos.”

Artificial intelligence also has its roots in Aristotelian syllogistic logic, which helped future mathematicians transform “propositions into a precise formal language that can be understood by computers.”

These precursors to artificial intelligence would later give rise to the earliest and crudest attempts at creating a synthetic mind. While difficult to believe, it’s evident that humans were deeply interested in the subject even hundreds of years ago.

In the 13th century, Albertus Magnus and Roger Bacon are said to have created what can only be described as the first talking machine. The strange, uncanny-looking device, the Simulacra, included a prosthetic head attached to a mechanism controlled by a person.

The technology was so new and foreign to people that it was considered to be connected to magic. It was also considered heretical, as it directly opposed Christian ideas of God being the sole creator.

While the Golem is unlike the previous inventions, being that they were real, this old myth is still significant in that it proposed the idea of creating sentient beings driven by the need to help people.

The story goes as follows:

“The legend continues that the Maharal went down to the riverbank and there made a man’s shape from the clay. The Maharal used his knowledge of Kabbalistic teachings specifically the secrets from the “Sefer HaYitzirah” (the Book of Creation) to bring life into the clay form. When the clay man came to life and rose, the Maharal sent him to protect the Jews.”

The Golem was essentially the 16th-century Frankenstein, only he wasn’t created to satisfy curiosity but to aid human life, to prolong it and protect it. These themes would later resurface in modernity as AI took on the role of the Golem.

Many of these early forays into artificial intelligence, however, were considered extremely heretical, a by-product of a time when secularity meant absolute betrayal of the status quo. All of this greatly affected the way people looked at artificial intelligence, often relegating it to a demonic or fantastical process only to be studied by troubled souls and traitors to the faith.

That is, until light was brought into the world.

A light bulb appears: The Enlightenment

Following the 17th century, Western thought changed dramatically, introducing the age of objectivity and secularity. The influence of the church waned significantly, ushering in whole new ways of governing and thinking.

People, most prominently upper-class aristocrats, began contemplating philosophical conundrums that would later resurface in the advancement of artificial intelligence. The most significant shift in thought was the idea that the world could be examined like a machine, a modular mechanism having parts that contribute to a whole.

Thomas Hobbes was one of the early thinkers who pushed this idea in his famous work Leviathan, which depicted government and society as a gigantic structure of cogs and mechanisms, each contributing to a whole governed by an overruling sovereign.

René Descartes, known for writing “I think, therefore I am,” began interpreting animals and life as nothing more than complex machines. This became known as Cartesian mechanism, a line of thinking that would later be broken down as science and technology began explaining the nuanced and chaotic nature of life.

Along with progressive thinking, there were engineering breakthroughs during this period as well. Around 1673, Sir Samuel Morland began creating machines capable of simple arithmetic such as addition, subtraction, multiplication, and division. These primitive calculators signified a growing trend towards mechanizing simple tasks.

Morland’s calculators were then improved by Gottfried Wilhelm von Leibniz, who not only developed the calculator but also advocated the binary system, which he thought was ideal for machines.

The first real artificial intelligence came from Herbert A. Simon and Allen Newell. It became public during a lecture where Dr. Simon famously said to his class at Carnegie Institute of Technology, “Al Newell and I invented a thinking machine.”

This thinking machine was a program dubbed Logic Theorist. It was different from the other computer programs of the time, whose sole purpose was to take in and put out large amounts of data.

The Logic Theory Machine and the General Problem Solver programs would go on to serve as the basis of all future artificial intelligence research. Simon predicted that “computer chess would surpass human chess abilities within ‘ten years.’” In reality, that transition took about forty years.

Nevertheless, forty years later we were greeted by IBM’s Deep Blue, the first computer to beat the reigning world chess champion in a six-game match. The match lasted several days, and headlines around the world shocked the masses as artificial intelligence finally showed its teeth.

Computer science became an even more serious area of study that day, pushing the boundaries of what we thought possible. People began seeing its applications, its potential for convenience and optimization.

Modern Day Artificial Intelligence

Nowadays, artificial intelligence is nothing short of genius. It’s being used in nearly every industry, including medical diagnosis, stock trading, robot control, law, remote sensing, scientific discovery, and toys.

It’s fascinating to think about how far we’ve come technologically. We now have algorithms capable of learning, such as those from Google’s DeepMind, which can “perform well across a wide variety of tasks … including but not limited to deep neural networks, reinforcement learning and systems neuroscience-inspired models.” These are machines that can truly think. Granted, they’re not perfect, but they are so close that it’s beginning to feel uncanny.

As we continue to master the intricacies of artificial intelligence, however, the moral and ethical implications grow increasingly difficult. A question we may never have an answer to is whether or not a robot can have a “soul.” Can it truly be sentient despite being pre-programmed by human hands? Do we even have the authority to determine what is and isn’t living?

There are also widespread fears of being ruled by machines with AI, a theme that has been thoroughly explored in The Matrix trilogy and numerous other science fiction novels and films. People also seem to think that AI will eventually replace regular jobs, creating a world that is completely automated and devoid of work.

Regardless of a possible technological renaissance or an apocalyptic extinction, it’s clear that AI was an inevitability, a product of centuries of human curiosity and ingenuity finally reaching its apex in our modern era.

So with all of that being said, are we truly The Modern Prometheus?

To the Church, God alone was the creator of all things living. In fact, Albertus Magnus’ talking head is said to have later been destroyed by St. Thomas Aquinas, who was actually Magnus’ former student.

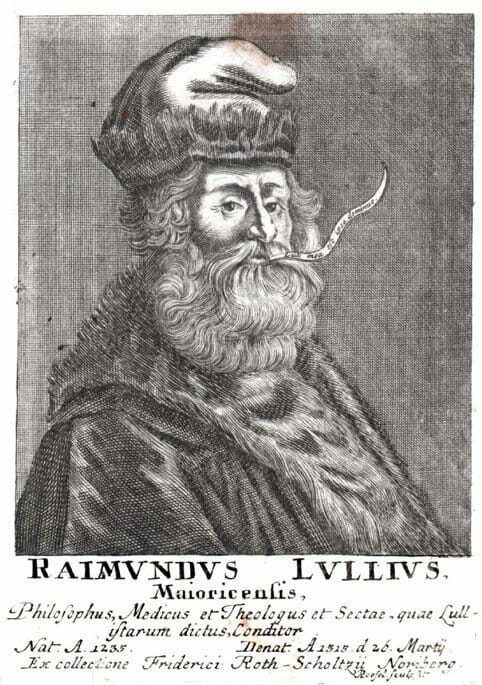

Following the tradition of Aristotle, many intellectuals began making serious breakthroughs in logical and mathematical thinking. A huge contributor to this was Ramon Lull, who created one of the first computational machines, described thus:

“A typical Lullian machine consisted of two or more disks having a common spindle. Each disk could be rotated independently of the others. A Lullian machine was operated by rotating the two disks independently, much as we would a star finder or (some years ago) a circular slide rule. At any setting of the disks, pairs of God’s attributes would be juxtaposed on the rims of the inner and outer disks. Rotating the disks would create different pairings. One would thus discover that God is Good and Great, Good and Eternal, Great and Eternal, and so forth. The heretic and the infidel were supposed to be brought to the True Faith by these revelations.”

Essentially, Lull advanced the idea that non-mathematical reasoning can be done, or at least assisted, by combinatorics. Lull was able to mechanize a specific form of thought and create a machine to represent it. This would greatly affect ideas about computational processes, especially processes that involve combining symbols.
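To make the combinatorial idea concrete, here is a minimal sketch of the pairing process in Python. It is only an illustration, not a reconstruction of Lull's actual device: the attribute list contains just the three attributes named in the quotation above, the function name `disk_pairings` is made up for this example, and the code enumerates combinations directly rather than simulating the physical rotation of the disks.

```python
from itertools import combinations

# Only the three attributes named in the quoted description are used here.
ATTRIBUTES = ["Good", "Great", "Eternal"]


def disk_pairings(attributes):
    """Return one statement for every unordered pair of distinct attributes,
    as if each setting of the two disks were read off in turn."""
    return [f"God is {a} and {b}" for a, b in combinations(attributes, 2)]


for statement in disk_pairings(ATTRIBUTES):
    print(statement)
```

With these three attributes the sketch prints the same three pairings listed in the quotation. Adding attributes to the list grows the number of statements quickly, which is the sense in which the machine "discovers" combinations a person might not think to write down.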

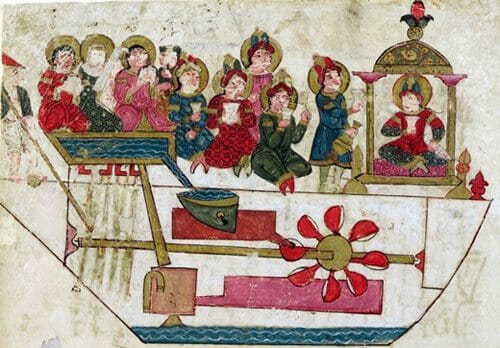

Another highly influential figure in the early history of artificial intelligence was Al-Jazari, who created a musical robot band that was automated through the use of hydraulics. It worked by using something called hydraulic switching, and the machine also powered a drum that was programmed with pegs that bumped into levers. It was one of the first instances where we got to see something work independently from its creator: something with a life of its own.
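The pegged drum can be thought of as a very early stored program: the arrangement of pegs decides which levers are struck as the drum turns. The sketch below illustrates that idea in Python under loud assumptions. The peg layout, the lever names, and the function `rotate_drum` are all hypothetical and are not taken from Al-Jazari's design.

```python
# Hypothetical peg layout: each entry is one lever, each list position is one
# position on the drum. True means a peg is set there and will strike the lever.
PEGS = {
    "drum": [True, False, True, False],
    "cymbal": [False, True, False, True],
}


def rotate_drum(pegs, steps):
    """Yield, for each step of rotation, the list of levers struck by a peg."""
    length = len(next(iter(pegs.values())))
    for step in range(steps):
        position = step % length  # the drum wraps around after a full turn
        yield [lever for lever, row in pegs.items() if row[position]]


for struck in rotate_drum(PEGS, 6):
    print(struck)
```

Moving the pegs changes the rhythm without changing the machine itself, which is what makes the drum "programmed" rather than merely mechanical.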

Medieval Times: An Interest is Sparked

Before the creation of Gutenberg’s printing press, many people were left in the dark intellectually. Few had access to even common texts like the Bible, and because of that people were left toiling away as peasants, unaware of the vast knowledge existing outside of their dreary worlds.

However, when books and texts became widely distributed, a renaissance of sorts began brewing. In the time between the 15th century (when the printing press was created) and the 18th century, many of our modern machines began seeing the light of day.

The first modern measuring machines were produced sometime in the 15th and 16th centuries. Some of the most intricate clock designs were being created, signifying an increasing mastery over design and engineering.

Using the same principles as clock making, engineers began creating mechanical animals. One of the most famous was Leonardo da Vinci’s walking lion, a giant windup-like toy that operated using gears and wooden planks. For its time, it was incredibly innovative and different from anything anyone had seen before.

Another important myth that derives from the 16th century is that of the ‘Golem,’ a creature that was allegedly created by the Maharal of Prague, or Rabbi Judah Loew.

Binary numbers suit machines “because they require only two digits, which can easily be represented by the on and off states of a switch.”

While the Enlightenment was characteristically a time when barriers were being broken down, significant challenges remained unsolved. The promise and vigor of these early thinkers, however, continued to influence modern Western thought. Many concepts like objectivity and the social contract still live on today in societies and democracies around the world. As a result, the sciences remain the highest standard of empirical truth.

The Dawn of the Industrial Revolution

From this point on, technology began advancing exponentially. As labour migrated from agrarian fields to rustic factories, production sped up rapidly. Goods and materials were being traded at a much faster rate, and with advancements in transportation and communication, the world began to feel smaller and more interconnected.

The most famous automaton that came out of the 18th century was built by Hungarian engineer Baron Wolfgang von Kempelen, who created “The Turk,” a mechanical device shaped like a person that was capable of playing chess. It was so well done that Edgar Allan Poe famously wrote that the Turk could not be a machine because, if it were, it would not lose.

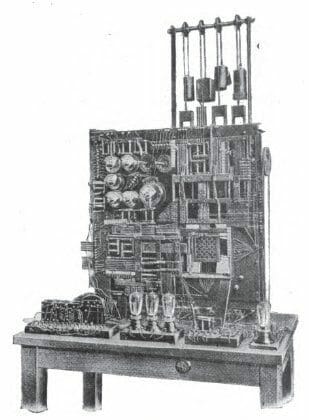

The second machine that played chess was built by Torres y Quevedo in 1920. This time, however, it wasn’t entirely a gimmick. The machine actually used electromagnets under its board to play the endgame of rook and king against a lone king. The description of the machine is as follows:

“Well, not precisely play. But the machine could, in a totally unassisted and automated fashion, deliver mate with King and Rook against King. This was possible regardless of the initial position of the pieces on the board. For the sake of simplicity, the algorithm used to calculate the positions didn’t always deliver mate in the minimum amount of moves possible, but it did mate the opponent flawlessly every time.”

The 18th, 19th, and early 20th centuries saw a lot of tinkering with machines. The digital age hadn’t quite made its appearance yet, though, and machines were still very rudimentary and limited in scope. This all changed, however, after WWII.

1950s and Onward!

In 1956, during a Dartmouth conference, the phrase “artificial intelligence” was officially coined, thus commencing an entirely new wave of technological development.

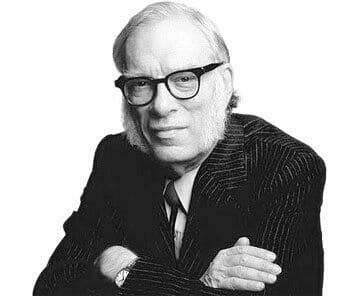

Pop culture began exploring ideas of sentient robotics; most notably, author Isaac Asimov began working the idea into his stories. In his popular short story “The Last Question” (1956), an omnipotent machine called Multivac acts like a modern Google in that it answers all questions directed at it.